This paper is Part 2 of a series; Part 1 is available here.

Executive Summary

Kentucky’s decision to replace the ACT with the SAT as its statewide 11th-grade college admissions exam represents a significant and poorly managed change to the state’s assessment system—one that weakens accountability, raises questions about statutory compliance, and demands immediate legislative and state school board attention.

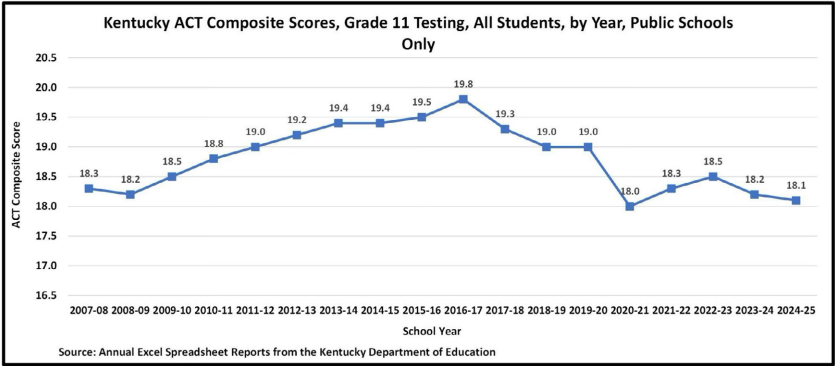

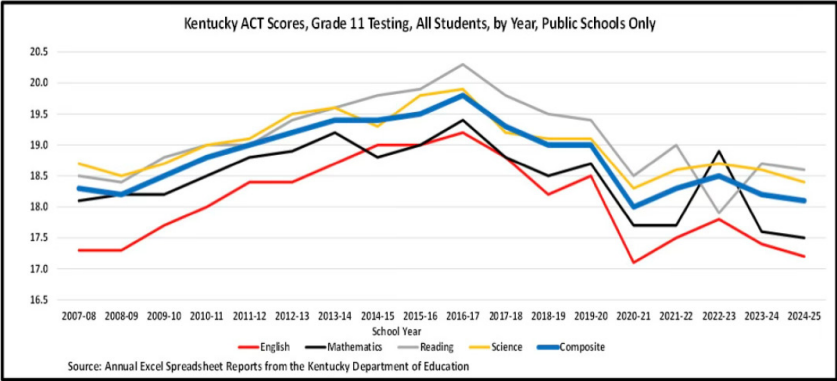

For nearly two decades, Kentucky’s annual administration of the ACT college entrance test to all 11th grade students has produced the longest continuous and comparable measure of student performance in the state. That trend line shows a sustained decline in achievement since 2016-17, including across English, mathematics, reading, and science (see Figures 1 & 2). These results stand in sharp contrast to more favorable outcomes reported on the Kentucky Summative Assessments (KSA) for high schools, raising serious concerns about how accurately current state assessments reflect true student performance.

Figure 1

Figure 2

Despite the importance of this information, ACT results were omitted from key public communications and largely excluded from state board discussions in late 2025. At the same time, the Kentucky Department of Education (KDE) moved forward—without required stakeholder involvement—to replace the ACT with the SAT beginning in 2026, effectively ending an 18-year trend line.

This is not a routine substitution. The ACT and SAT are fundamentally different tests, and widely accepted concordance methods do not support constructing a valid long-term trend across them. As a result, Kentucky is losing its most reliable tool for tracking changes in student performance over time, particularly at a moment when policymakers need clear evidence about recovery from the impacts of COVID.

The process used to make this decision also raises serious governance concerns. Kentucky law requires the Kentucky Board of Education to oversee the assessment system and mandates consultation with multiple advisory bodies. Available evidence suggests that these requirements were not fulfilled prior to the decision to switch tests.

In addition, the SAT does not directly assess all subject areas required by statute as distinct tested domains. Specifically, it has no separate English or science component. This raises unresolved questions about whether the new assessment complies with Kentucky law.

The consequences of this decision extend beyond governance. The abrupt transition gives districts and students limited time to adjust to a different test format, potentially disadvantaging students who had been preparing for the ACT, particularly in less-resourced districts.

Taken together, these issues point to a breakdown in transparency, oversight, and accountability in Kentucky’s assessment system. The state is losing its most valuable long-term performance measure at a time when that information is most needed, while the replacement assessment is not directly comparable and does not appear to meet statutory requirements.

Legislators and the Kentucky Board of Education should act to restore proper oversight, ensure compliance with state law, and preserve the ability to accurately measure student performance over time. Without such action, Kentucky risks operating an assessment system that provides less clarity, less accountability, and less confidence for policymakers and the public alike.

Background

Kentucky law clearly establishes both the structure of the state’s assessment system and the responsibilities for overseeing it. Those requirements are especially important in light of findings from Part 1 of this report series, which raised concerns about incomplete data presentation, omitted results, and inconsistencies in trends between state and national assessments.

Under KRS 158.6453, the Kentucky Board of Education is responsible for creating and implementing a balanced statewide assessment program. The statute also requires that all 11th-grade students take a college admissions examination that assesses four specific subject areas: English, reading, mathematics, and science.

This requirement is explicit. However, as discussed in Part 1 and expanded upon here, the SAT does not directly assess all of these areas as distinct components. While it includes reading, writing, and mathematics, it does not provide a standalone science assessment and does not report a separate English score, either. No clear explanation has been provided to date demonstrating how the SAT can fully satisfy the statutory requirements.

KRS 158.6453 also requires that the assessment system be developed with input from multiple entities. Specifically, the Board must seek advice from:

- the Office of Education Accountability (OEA),

- the School Curriculum, Assessment, and Accountability Council (SCAAC),

- the Education Assessment and Accountability Review Subcommittee (EAARS), and

- the department’s technical advisory committee.

These consultations are not optional. They are built into statute to ensure that major decisions about the state’s assessments are transparent, well-informed, properly coordinated with other interested parties and subject to appropriate oversight.

The role of the Education Assessment and Accountability Review Subcommittee (EAARS) is particularly significant. Under KRS 158.647, EAARS is charged with advising the Board on implementation of the state’s assessment and accountability system, providing an additional layer of legislative oversight.

A change as significant as replacing the ACT with the SAT—especially one that eliminates nearly two decades of comparable performance data—would reasonably be expected to involve the EAARS and all of the other entities. However, available evidence indicates that such involvement did not occur. There is no clear record of meaningful consultation with EAARS or the other required groups, and the Kentucky Board of Education itself does not appear to have substantively reviewed or approved the change in its 2025 meetings.

The importance of these safeguards is underscored by events at the state board’s December 2025 meeting, as discussed in Part 1. That report showed that the ACT results, a key part of the assessment data, were completely omitted from the results presented to the board. This likely occurred because the ACT trends conflicted with the more favorable picture the Kentucky Department of Education presented based primarily on the Kentucky Summative Assessments (KSA). As a result, the board was denied a full picture of the state’s actual performance.

In light of those omissions, the role of advisory bodies and the Board itself becomes even more critical to ensuring that decisions are based on complete and accurate information about the 2025 assessment results.

Findings from Part 1 raise broader concerns: major decisions affecting Kentucky’s assessment system appear to be occurring without full transparency, without complete consideration of available data, and without the consultation and oversight that state law requires.

The Limits of Comparing the ACT and SAT

Replacing the ACT with the SAT is not a simple substitution. The two tests differ in design, content, and scoring—and those differences have important implications for accountability.

Both testing organizations acknowledge these limitations. According to the Guide to the 2018 ACT®/SAT® Concordance:

The ACT and the SAT are different tests. The ACT and the SAT measure similar, but not identical, content and skills. A concorded score is not a perfect prediction of how a student would perform on the other test.

The guidance further explains that the concordance is intended only for comparing individual performances at approximately the same point in time, not for constructing long-term trends. It explicitly warns:

Concordances are used to compare individual scores, not aggregate scores. Users should avoid converting aggregate scores (e.g., mean, median, ranges) using concordance tables, as this could introduce additional sources of error.

This has direct implications for Kentucky. Nearly two decades of ACT data cannot be reliably converted into SAT equivalents. The state’s most consistent measure of student performance cannot be preserved using the concordance.
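The warning about aggregate scores can be illustrated with a short sketch. The lookup table below is hypothetical, invented only for this illustration, and is not taken from the 2018 ACT/SAT concordance. The point is general: when a conversion table is not a straight line, converting each student's score and then averaging gives a different answer than averaging first and converting the average.

```python
from statistics import mean

# HYPOTHETICAL lookup table, invented for illustration only.
# These are NOT values from the 2018 ACT/SAT concordance.
TOY_CONCORDANCE = {16: 880, 17: 920, 18: 960, 19: 1010, 20: 1040, 21: 1080, 22: 1110}


def concord(act_score: int) -> int:
    """Look up the toy converted score for one individual ACT score."""
    return TOY_CONCORDANCE[act_score]


# Six made-up student scores whose average is exactly 19.
act_scores = [16, 16, 17, 22, 22, 21]

# Individual use: convert each student's score, then average the results.
mean_of_converted = mean(concord(s) for s in act_scores)

# Aggregate use (what the guide warns against): average first, then convert.
converted_mean = concord(round(mean(act_scores)))

print(f"Average of individually converted scores: {mean_of_converted:.1f}")
print(f"Converted average score:                  {converted_mean}")
print(f"Gap introduced by converting the average: {converted_mean - mean_of_converted:.1f}")
```

In this toy case the two approaches differ by about 13 points on the converted scale even though the underlying students are identical. The size of the gap in any real application would depend on the actual table and the actual score distribution, neither of which is modeled here.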

The problem is compounded by timing. The most recent concordance was developed in 2018, before the recent major changes to the SAT. Even if the revised SAT that Kentucky will use did not differ from earlier versions, the available concordance would still be dated.

The result is clear: switching to the SAT breaks Kentucky’s only long-term, comparable trend line—at a time when policymakers most need consistent data to evaluate recovery from the impacts of COVID.

The SAT Kentucky Will Use Is a Redesigned Assessment

The SAT Kentucky plans to administer is not simply a different version of a familiar test. It is a redesigned, digital, adaptive assessment that differs in structure, content, and reporting.

Digital and Adaptive Format

Under the state’s contract, the SAT will be administered primarily in digital form, with paper testing limited to certain students with disabilities or exceptional circumstances. This represents a fundamental shift in testing conditions, one that is likely to favor students with stronger digital skills.

Per KDE’s News Advisory 25-237, the new SAT is also adaptive. Students do not all receive the same questions. Instead, performance on the first set of questions determines the difficulty of later questions. As a result, early mistakes can limit a student’s opportunity to demonstrate higher-level proficiency.

As a result, students will not all receive the same test. This will complicate, and may even preclude, truly equitable scoring.

Left unexplained is how students with disabilities or exceptional circumstances who take the paper-and-pencil version can receive anything close to an equivalent of the predominant digital test. Equivalent scoring for these students is even less likely.

The new SAT is also shorter than past versions, with fewer questions overall. This increases the impact of each response. As one analysis notes:

With fewer questions on the test, small mistakes have a bigger impact. This is especially true for students aiming for high scores—missing just one or two additional questions can shift a score significantly.

Changes in Rigor and Content

The shorter format of the new SAT raises concerns about the depth and rigor of what is being assessed. According to the James G. Martin Center for Academic Renewal:

…in the new ‘Reading and Writing’ section of the test, they shortened reading passages from 500-750 words all the way down to 25-150 words, or the length of a social-media post, with one question per passage.

The same analysis notes that these changes necessarily resulted in the removal of longer, more complex reading material:

This resulted in the elimination of significant portions of SAT’s previously used reading material, including “passages in the U.S. founding documents/Great Global Conversation subject area,” because of their “extended length.” Nevertheless, the College Board takes the view that the rigor of the Reading and Writing segment is unchanged. They claim in the assessment framework that the eliminated reading passages are “not an essential prerequisite for college” and that the new, shorter content helps “students who might have struggled to connect with the subject matter.”

These changes raise legitimate questions about whether the new SAT assesses reading comprehension and analytical skills at the same depth as earlier formats.

Reduced Diagnostic Value

At the same time, reporting from the SAT has become less detailed. According to Open Door Education:

The lack of detailed breakdowns makes it harder for students to identify specific areas for improvement. This means that targeted prep requires additional assessment tools beyond the official reports.

This reduction in diagnostic information limits the usefulness of the test for educators and students seeking to improve performance.

Subject Coverage and Validity Concerns

The shift to the SAT raises important questions about how required subject areas are assessed. Kentucky law requires testing in English, reading, mathematics, and science.

The SAT directly assesses reading, writing, and mathematics, but it does not include a standalone science test.

Limited science testing

Instead, the SAT proposes to generate a science-related score based on performance on selected questions embedded within other sections. This differs from the ACT, which includes a dedicated science assessment designed specifically to measure those skills.

This distinction matters. A derived score based on a limited number of embedded items is not equivalent to results from a separate and focused assessment. Concerns are heightened by the digital SAT’s shorter format. With fewer total questions, and questions of shorter length than previous SAT versions, any science-related score will be based on a very limited evidence base, raising serious questions about reliability and validity.

In addition, due to the limited number of questions on the digital SAT, it seems inevitable that deriving anything close to a valid science score will require a large number of questions in the reading and writing sections to be biased towards science topics. That puts students better versed in subjects like civics, history and other non-science areas at a disadvantage, making the reading and writing sections more like a science test than a true evaluation of reading and writing in general.

No unique English score

The SAT does not report English as a distinct subject area. This further complicates compliance with the statutory requirements, which are aimed at producing a specific score for student performance in areas such as grammar and punctuation. This statutory requirement was likely inserted because of experiences in the early years after the Kentucky Education Reform Act of 1990 was passed. At that time, progressive educators downplayed the importance of the technical aspects of writing, and it became clear to legislators that this was a mistake.

Taken together, these differences make it difficult to determine whether the new digital SAT fully meets Kentucky’s legal requirements. More importantly, there is a more fundamental concern: will the assessment system continue to measure the subjects the state needs to assess, and will the resulting scores provide a sound basis for accountability?

How the Transition Occurred

The transition from the ACT to the SAT was not only a major policy change—it was carried out in ways that raise concerns about transparency, data reporting, and oversight. These issues involve both how the decision was made and how student performance has been communicated. Together, they call into question whether policymakers have been given a complete and accurate picture of educational outcomes.

1. Lack of Transparency in the Transition

The transition from the ACT to the SAT was not conducted in a transparent, collaborative or well-documented manner.

The process began in early 2025, when the Kentucky Department of Education initiated procurement for the state’s required 11th-grade college admissions examination. This apparently occurred without public discussion with the state board or clear notice to other key stakeholders. By June 13, 2025, a contract had already been executed to replace the ACT with the SAT.

Two weeks later, on June 27, 2025, the Kentucky Board of Education was informed by email rather than in an open meeting of the board. Another three days passed before district assessment coordinators were informed. At this point, there was little to no awareness among district leaders, the media, or the public that such a significant change was under consideration, let alone finalized. This lack of transparency extended to entities identified in statute, including the Kentucky Board of Education and advisory bodies such as EAARS, OEA, and SCAAC, none of which appear to have been meaningfully involved prior to the decision despite statutory requirements.

Also left uninformed until after the contract was signed was the Kentucky Council on Postsecondary Education. The council’s Amanda Ellis wrote on July 1, 2025, that higher education leaders “were unaware of this change and are surprised to learn of the quick implementation.” Ellis also stated that in the past the council had been advised about the requests for proposals, so “this has become a very big deal for our campuses.” Ellis additionally wanted to know which higher education representative had served on the assessment review committee required to advise on such issues by state law. She also mentioned that the change in tests would impact the Kentucky Higher Education Assistance Authority, which has an important role in awarding state-funded scholarships.

Coverage in the Courier-Journal made it clear that local school districts were also left out of the process. Awareness of the change emerged gradually and informally. District personnel first learned of the shift through the email sent to assessment coordinators, not through formal communication to superintendents or a public announcement.

At least some district leaders were deeply unhappy about the change. Elizabethtown Independent Schools superintendent Paul Mullins pointed out that KDE’s change would affect many years of investment in ACT support activities such as practice exams and training. Jim Flynn, with the Kentucky Association of School Superintendents, said, “there really wasn’t any warning about it.” He added that districts now faced “a pretty short runway” to get students up to speed on a new assessment.

Public disclosure followed only well after the decision had effectively been made. A statewide advisory was not issued until September 2025—more than three months after the contract was signed.

This rushed and non-transparent sequence of events has practical consequences. The ACT and SAT differ in structure, pacing, and content. Preparing students for one does not fully prepare them for the other. Adjusting instructional strategies, test preparation, and student expectations requires time and resources—both of which were constrained by the delayed communication of this change. Districts with fewer resources are particularly challenged under such conditions.

In short, a major change to Kentucky’s assessment system—one that affects every public high school student—was implemented with limited transparency, minimal stakeholder involvement, and a compressed timeline for adaptation.

2. Limited Disclosure of ACT Results

At the same time the transition to the SAT was unfolding, the presentation of assessment data raises additional concerns about transparency and completeness.

When statewide results were released in November 2025, official communications focused heavily on Kentucky Summative Assessment (KSA) outcomes. However, the official news release did not include any ACT results even though those results are a required component of the state’s assessment system and represent its longest-running trend line of student performance.

The ACT data were technically available through the Kentucky School Report Card system, but that availability was not prominently reported or emphasized in official releases. As a result, the ACT results received limited attention from both policymakers and the media, who were largely left unaware that such data was even available.

This omission is significant. At the same time the state was preparing to discontinue this assessment, the most recent ACT results showed continued declines, not the improvement KDE tried to claim in the December 2025 state board meeting.

Through January 2026, ACT results continued to be omitted from state board discussions, even as other data—including even NAEP results from prior years—were presented. As documented in Part 1 of this report series, this selective omission occurred in a context where ACT trends conflicted with the more favorable picture presented by the KSA.

As discussed earlier, the ACT results show a sustained decline in student performance since 2016–17 across English, mathematics, reading, and science (see Figure 2). In contrast, KSA results suggest modest improvement. Presenting one set of results without the other creates an incomplete, and potentially misleading, understanding of system performance.

The timing of these omissions is also notable. The state minimized the visibility of ACT results at the same time it was preparing to replace the ACT entirely. As a result, one of the few independent and longitudinal measures of student performance was both underemphasized and ultimately removed.

The pattern extended into early 2026. At its January meeting, the Board did not review ACT results and deferred further discussion until May—after the legislative session had concluded.

3. Incomplete Presentation of Trends

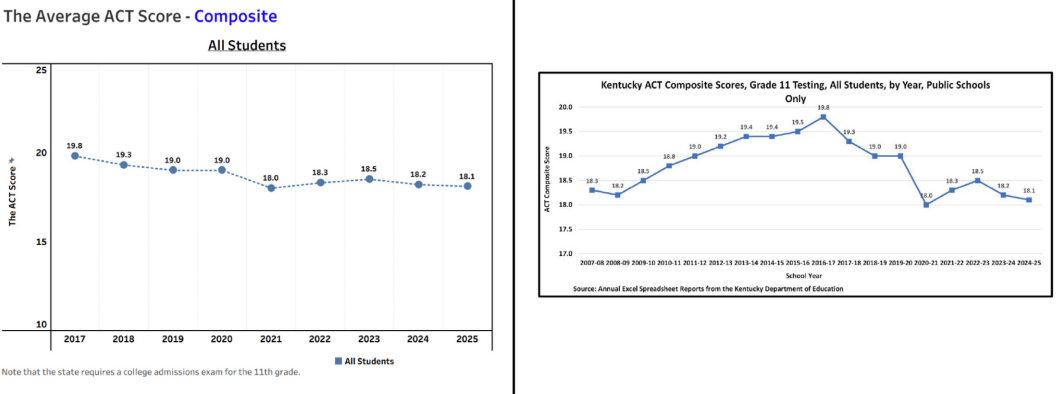

Despite the state board’s January announcement that the ACT results would not be examined until May, the February 2026 board agenda included a presentation on the ACT. However, the ACT scoring information presented to the board in February was incomplete.

The materials provided to the Board focused only on results from 2017 to 2025, totally omitting earlier years when performance had improved (see Figure 3).

Figure 3

The truncated presentation provided to the state board removes important context. The full ACT trend shows notable earlier gains followed by serious declines that have completely erased those gains. By excluding the earlier period, the materials presented to the board prevented members from understanding the full, and very disappointing, trajectory of student performance.

Given the ACT’s 36-point scoring scale, the decline from a peak composite score of 19.8 in 2016–17 to 18.1 in 2024–25 is substantial. Without the full trend in view, the magnitude and persistence of that decline are not apparent. Kentucky lost a decade of earlier progress on ACT, but the state board was denied that information.
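The size of that decline can be checked with simple arithmetic. The sketch below uses only the two composite scores and the 36-point scale cited above. Which comparison best conveys the magnitude (relative to the peak, or as a share of the scale) is a presentation choice made here for illustration, not a method drawn from KDE or ACT.

```python
# Composite scores as stated in the text above.
PEAK_2016_17 = 19.8
LATEST_2024_25 = 18.1
ACT_SCALE_MAX = 36  # top of the ACT composite scale

# Rounding to one decimal removes floating-point noise from the subtraction.
drop_points = round(PEAK_2016_17 - LATEST_2024_25, 1)
drop_relative_to_peak = drop_points / PEAK_2016_17 * 100
drop_share_of_scale = drop_points / ACT_SCALE_MAX * 100

print(f"Decline: {drop_points} points")                                      # Decline: 1.7 points
print(f"Relative to the 2016-17 peak: {drop_relative_to_peak:.1f}%")         # 8.6%
print(f"As a share of the 36-point scale: {drop_share_of_scale:.1f}%")       # 4.7%
```

A statewide average moves far less than individual scores do, so a 1.7-point shift in the composite for all 11th graders represents a large change in the underlying distribution of student results.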

For policymakers responsible for oversight, access to complete trend data is essential. Partial presentations can obscure important patterns and weaken informed decision-making.

This situation raises an important concern: whether Kentucky’s assessment system is providing a full and balanced picture of student outcomes, or whether key, but disturbing, data are being suppressed at the moment they are most relevant.

A Pattern That Raises Concern

Each of these issues (the lack of transparency in the transition process, the limited visibility of ACT data, and the incomplete presentation of trends) raises concerns on its own.

Taken together, they point to a broader pattern.

A major policy decision was made with limited public explanation and minimal stakeholder involvement.

At the same time, key performance data were not consistently presented or emphasized.

Oversight processes required by statute do not appear to have functioned as intended.

The result is an appearance that troubling assessment data have been set aside to portray a brighter picture than the full evidence can support.

Some of these issues may simply reflect poor administrative decisions or communication problems. However, the cumulative effect creates a picture of a system in which decision-making, data reporting, and oversight are not fully aligned with expectations for transparency and accountability.

For legislators and the Kentucky Board of Education, the central issue is not simply which test is used. It is whether the state’s assessment system continues to provide a clear, accurate, and trustworthy picture of student performance.

At present, that question remains unresolved.

Recommended Actions

The issues outlined in this report point to a major problem: Kentucky’s assessment system is not operating with the level of transparency, stability, and oversight required to support sound policymaking and may not be in compliance with state law.

At a time when lawmakers and education leaders most need clear and reliable information—particularly to understand recovery from the impacts of COVID—the state has eliminated its longest-running and most consistent measure of student performance. At the same time, key data have been underemphasized or omitted, and a major change to the assessment system appears to have been made without the full involvement of the entities required by law.

These concerns require action from both the Kentucky Board of Education and the General Assembly.

For the Kentucky Board of Education

The Board should immediately reassert its statutory responsibility over the state’s assessment system.

First, the Board should conduct a formal review of the decision to replace the ACT with the SAT, including whether the process complied with KRS 158.6453 and whether required advisory bodies were consulted.

Second, the Board should require a clear and public determination of whether or not the SAT fully meets statutory requirements to assess English, reading, mathematics, and science. If that standard cannot be met, the Board should reconsider the use of the SAT.

Third, the Board should ensure full transparency in the reporting of assessment data. All major statewide results—including college admissions testing—should be consistently presented in board meetings and included in official public releases. Presentation of partial, and slanted, results must not continue.

Finally, the Board should establish clear expectations for complete and accurate data presentation going forward, including the use of full trend lines and appropriate statistical interpretation.

In short, the Board must move beyond a passive role and actively govern the assessment system it is charged with overseeing.

For the Kentucky General Assembly

The legislature, which holds ultimate responsibility for education in the commonwealth, should take steps to restore accountability, clarity, and public confidence.

First, the General Assembly should investigate the process that replaced the ACT with the SAT, including the timeline, procurement process, level of board involvement, and whether statutory consultation requirements were followed.

Second, legislators should determine whether the SAT complies with existing statutory requirements. If it does not, corrective action should be required.

Third, the legislature should act to preserve or restore meaningful longitudinal accountability. This may include requiring a return to the ACT or establishing a clear, legislatively approved plan to maintain comparable performance measures over time.

Fourth, the General Assembly should consider creating an independent assessment oversight body to develop assessments, evaluate results and report directly to policymakers and the public. Separating responsibility for assessment from responsibility for the programs to improve education would reduce conflicts of interest and improve credibility.

Finally, legislators should clarify governance roles within the assessment system to ensure that major decisions cannot be made without proper oversight and transparency.

Conclusion

Kentucky cannot afford an assessment system that obscures more than it reveals. Without clear, consistent, and credible measures of student performance, neither policymakers nor the public can accurately judge whether the state’s education system is improving.

Restoring transparency, preserving meaningful trend lines, and enforcing proper oversight are essential steps toward ensuring that Kentucky’s assessment system serves its intended purpose: providing an honest and reliable picture of how well the state’s students are being prepared for the future.